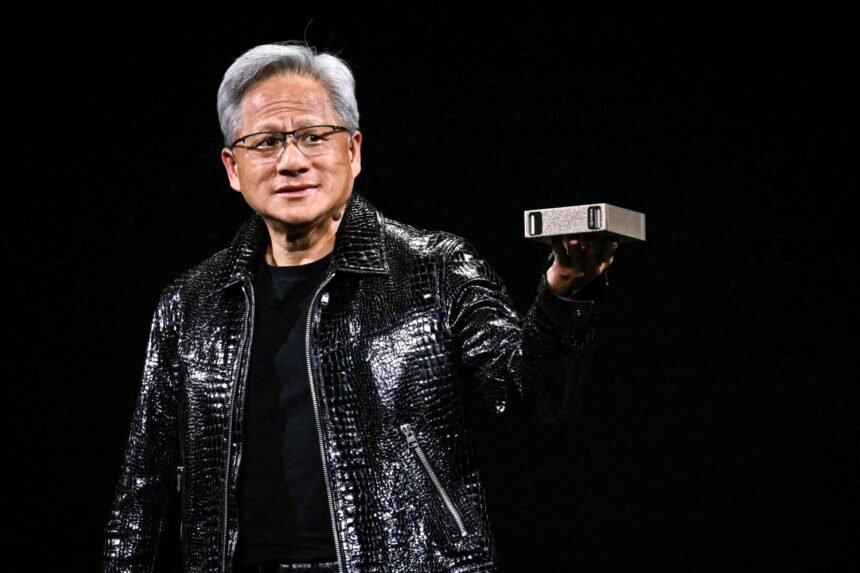

NVIDIA CEO Confirms Full Production of Next-Gen AI Chips

Nvidia (NVDA.O) CEO Jensen Huang said the company’s next generation of chips is now in full production. According to Huang, the chips can deliver up to five times the AI computing power of Nvidia’s previous chips when running chatbots and other AI applications.

Huang revealed the details during a keynote at the Consumer Electronics Show in Las Vegas. The chips are already in Nvidia’s labs, where AI firms are testing them, and are expected to launch later this year. The announcement comes as Nvidia faces growing competition from rivals and even some of its own customers.

The Vera Rubin Platform: High-Performance AI Computing

The new Vera Rubin platform consists of six Nvidia chips combined into a single system. Its flagship server will feature 72 graphics units and 36 new central processors. Huang also explained that multiple servers can be connected into “pods” containing more than 1,000 Rubin chips, which he said can generate AI “tokens”, the units of text that AI models process, ten times more efficiently.
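To give a sense of scale, the short sketch below estimates the minimum number of flagship servers a pod would need. It assumes that the "Rubin chips" counted in a pod are the 72 graphics units per server, which the announcement does not state explicitly, and it treats 1,000 as a lower bound. The constant and function names are illustrative only.

```python
import math

GPUS_PER_SERVER = 72   # graphics units in the flagship server, per the article
POD_CHIPS = 1_000      # the article says "over 1,000", so this is a lower bound


def min_servers(total_chips: int, chips_per_server: int) -> int:
    """Smallest whole number of servers that holds at least total_chips."""
    if chips_per_server <= 0:
        raise ValueError("chips_per_server must be positive")
    return math.ceil(total_chips / chips_per_server)


servers = min_servers(POD_CHIPS, GPUS_PER_SERVER)
print(f"A pod of {POD_CHIPS}+ chips needs at least {servers} servers")
```

Under these assumptions, a pod spans at least 14 servers; the actual pod configuration may differ.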

Huang said the Rubin chips achieve this leap in performance with only 1.6 times as many transistors as their predecessors. They do so in part by using a proprietary data format that Nvidia hopes the wider industry will adopt.
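As a back-of-the-envelope check, the sketch below divides the "up to five times" performance claim by the 1.6x transistor figure. It assumes both numbers describe the same chips measured against the same baseline, which the announcement does not spell out. The function name is illustrative only.

```python
def gain_per_transistor(performance_gain: float, transistor_gain: float) -> float:
    """Return the performance gain relative to the growth in transistor count."""
    if transistor_gain <= 0:
        raise ValueError("transistor_gain must be positive")
    return performance_gain / transistor_gain


# Figures quoted in the article; treating them as directly comparable is an assumption.
ratio = gain_per_transistor(performance_gain=5.0, transistor_gain=1.6)
print(f"{ratio:.3f}x more AI compute per transistor")
```

If the assumption holds, roughly a threefold improvement would have to come from something other than raw transistor count, such as the data format and other design changes.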

Enhancing AI Model Deployment

While Nvidia continues to dominate AI model training, it faces rising competition from companies like AMD (AMD.O) and Google (GOOGL.O) in deploying those models. Huang emphasized that the new chips are designed to efficiently serve AI models to hundreds of millions of users.

To support this, Nvidia introduced “context memory storage,” a new layer of memory that helps chatbots respond faster to long questions and conversations.

Networking Upgrades: Co-Packaged Optics

Nvidia also unveiled a new generation of networking switches that use co-packaged optics, a technology for linking thousands of machines into a single high-speed network. The switches put Nvidia in competition with Broadcom (AVGO.O) and Cisco Systems (CSCO.O) in large-scale data center networking.

Looking Ahead

The launch of the Vera Rubin platform and supporting network technology marks a major step forward for Nvidia’s AI ambitions. With enhanced processing power, faster memory, and improved networking, the company aims to maintain its lead in AI infrastructure while expanding the adoption of AI technologies across industries.