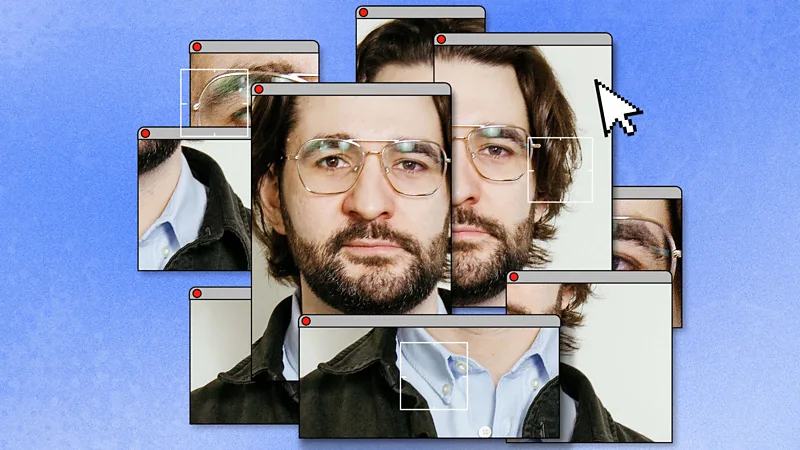

Family experiment raises new doubts

A writer tested whether a close family member could tell the difference between his real voice and an AI-generated version. He told his aunt that she would receive two calls, and that either one could be him or a machine.

At first, she rejected the idea that AI could sound human. During the call, she noticed that the voice felt familiar but slightly unusual in tone. She said real speech usually has more natural shifts in emotion. Even so, she was not fully sure which voice was real.

The result showed how easily identity can become uncertain when synthetic voices closely copy human speech.

Deepfake fears reach public figures

The issue is not limited to private conversations. Public figures have also been caught up in similar confusion online.

Israeli Prime Minister Benjamin Netanyahu recently appeared in videos that triggered online speculation. Some viewers claimed that visual details such as lighting and finger positioning suggested the footage was artificially generated.

The videos quickly spread across social media, along with false claims about their authenticity.

Digital forensics experts later examined the footage in detail. They reviewed lighting, sound consistency, facial movement, and frame-level details, and found no evidence of AI generation. They explained that the unusual visuals were caused by normal recording conditions such as camera angle and shadows.

Experts warn trust is weakening

Specialists in digital media say the problem is no longer only fake content. Real content is also being questioned.

Small glitches in video or audio can now lead to widespread doubt. Even after explanations are given, some audiences continue to believe false claims.

Experts say this creates a situation where proof is no longer simple. A single video or recording is often not enough to confirm identity.

Personal trust still matters most

The writer also shared another incident involving his family. After sending a message in a group chat, his mother questioned whether it was really him. She asked for a personal detail only family would know. Once he answered correctly, she accepted it.

This shows that trust still depends heavily on personal knowledge and shared experience rather than technology alone.

No easy way to prove identity

Experts say there is currently no perfect method to prove identity using only audio or video. Both can be altered or misinterpreted.

Instead, verification is shifting toward repeated interaction, context, and personal recognition rather than single pieces of media evidence.

Conclusion

The rise of highly realistic synthetic media is changing how people judge identity. Even real recordings can be doubted, while false content can appear convincing.

Experts say the challenge is no longer just detecting fake material. It is also rebuilding trust in a digital world where seeing and hearing are no longer enough on their own.